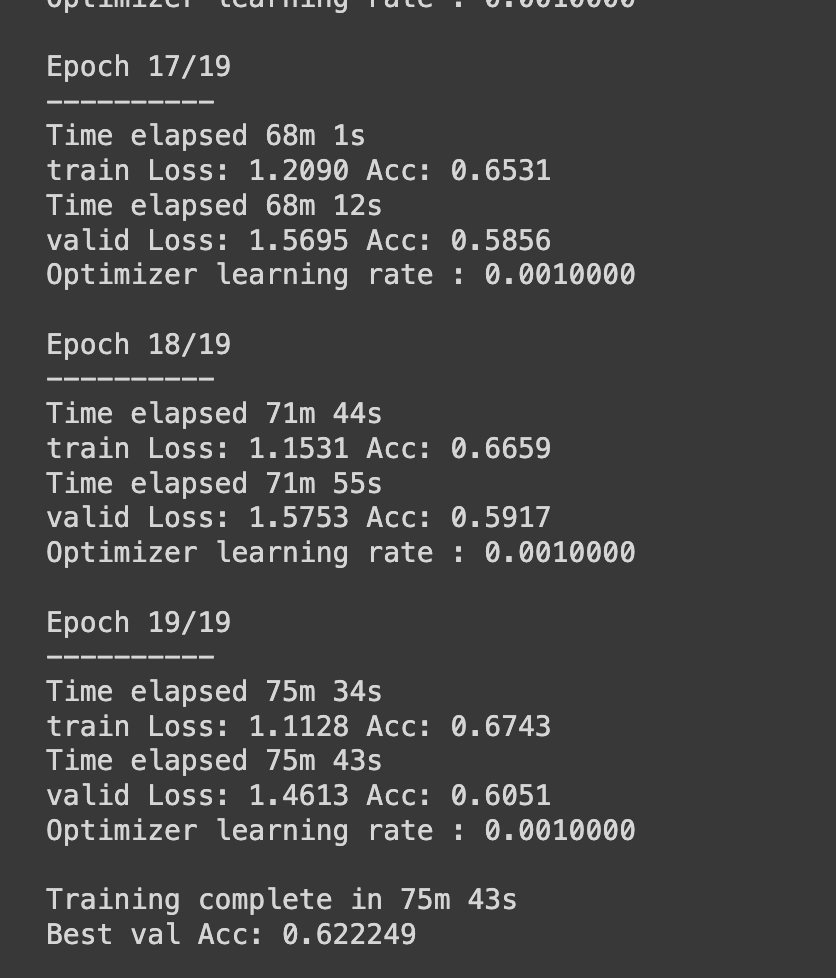

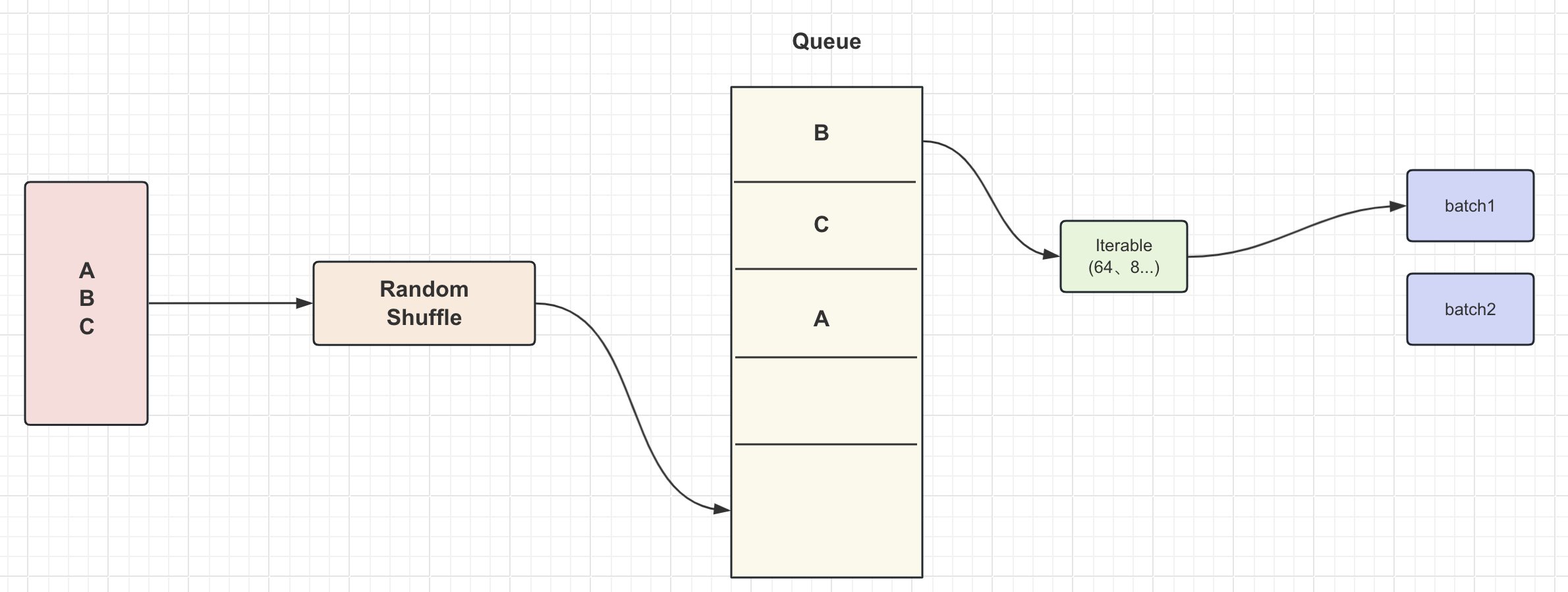

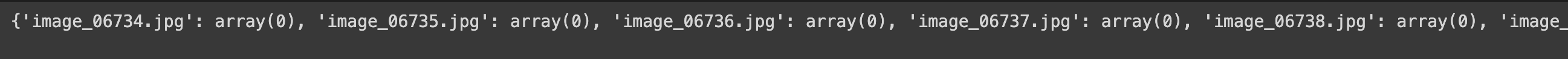

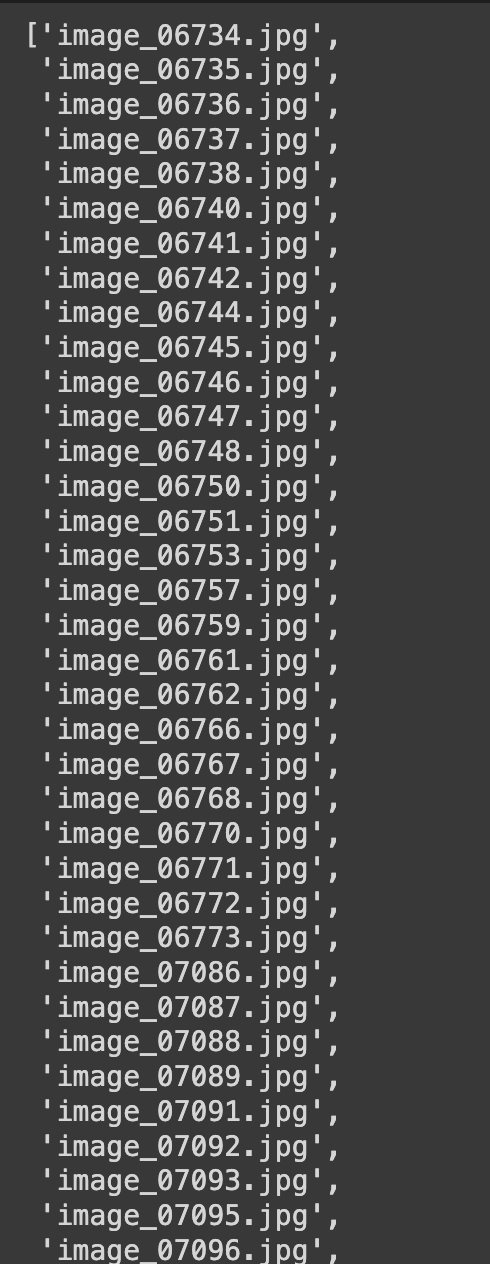

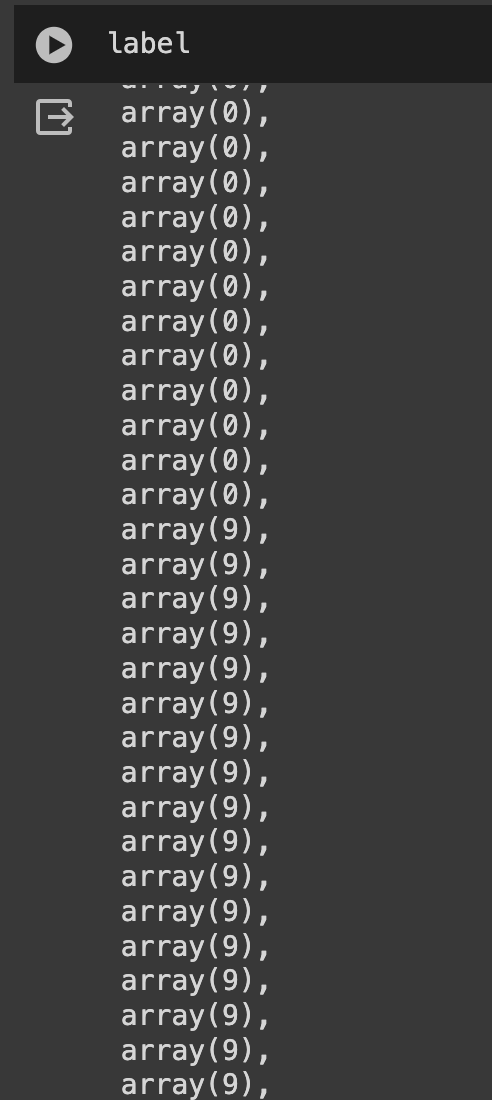

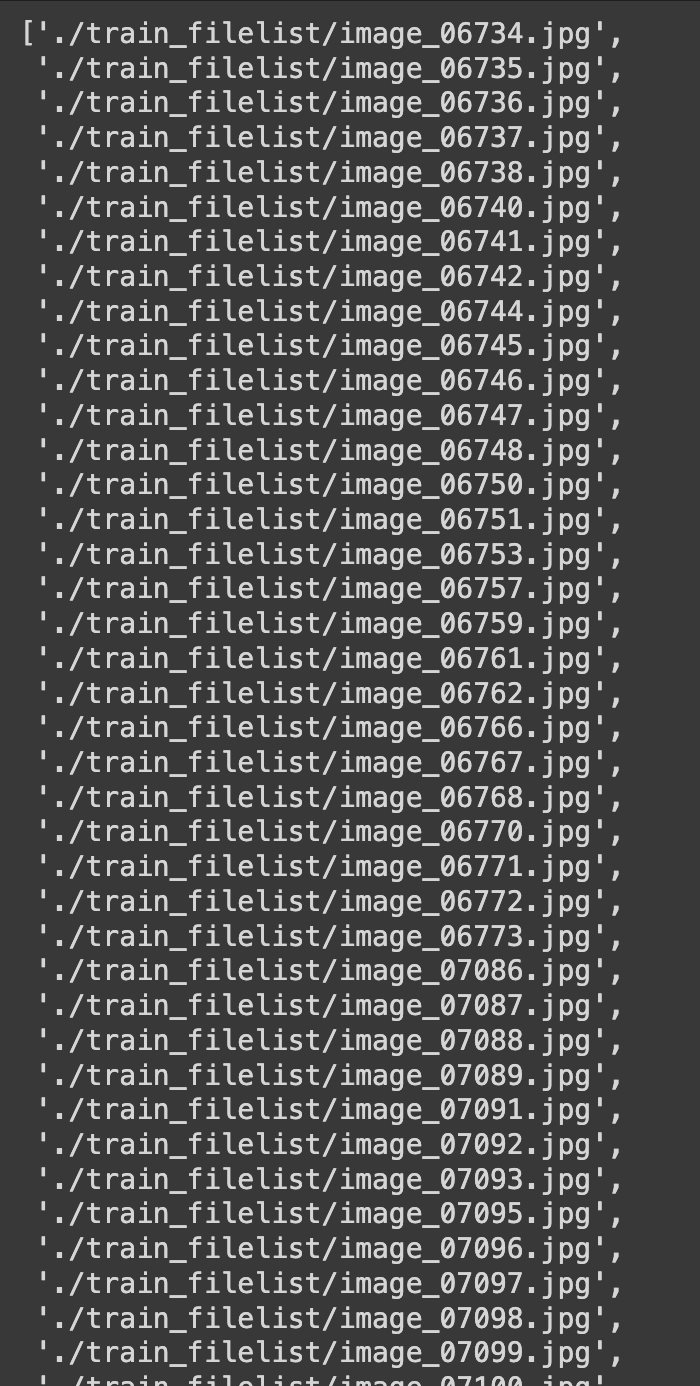

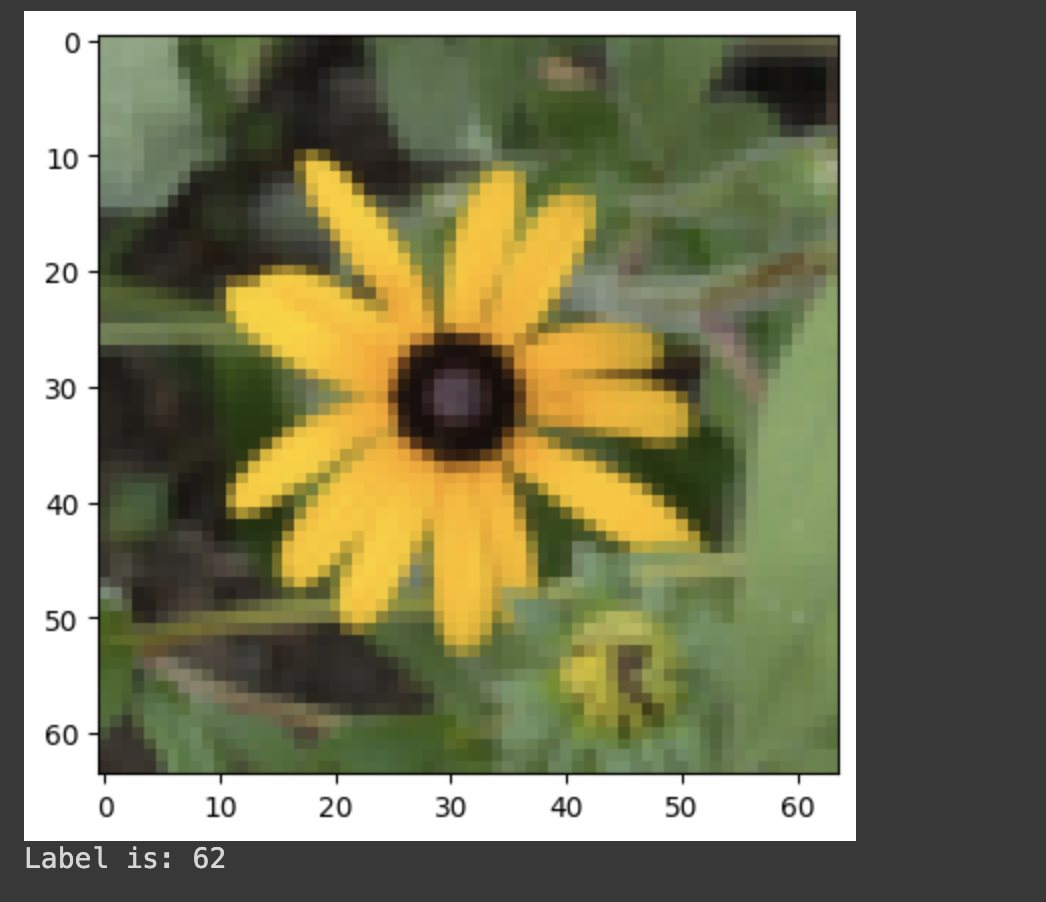

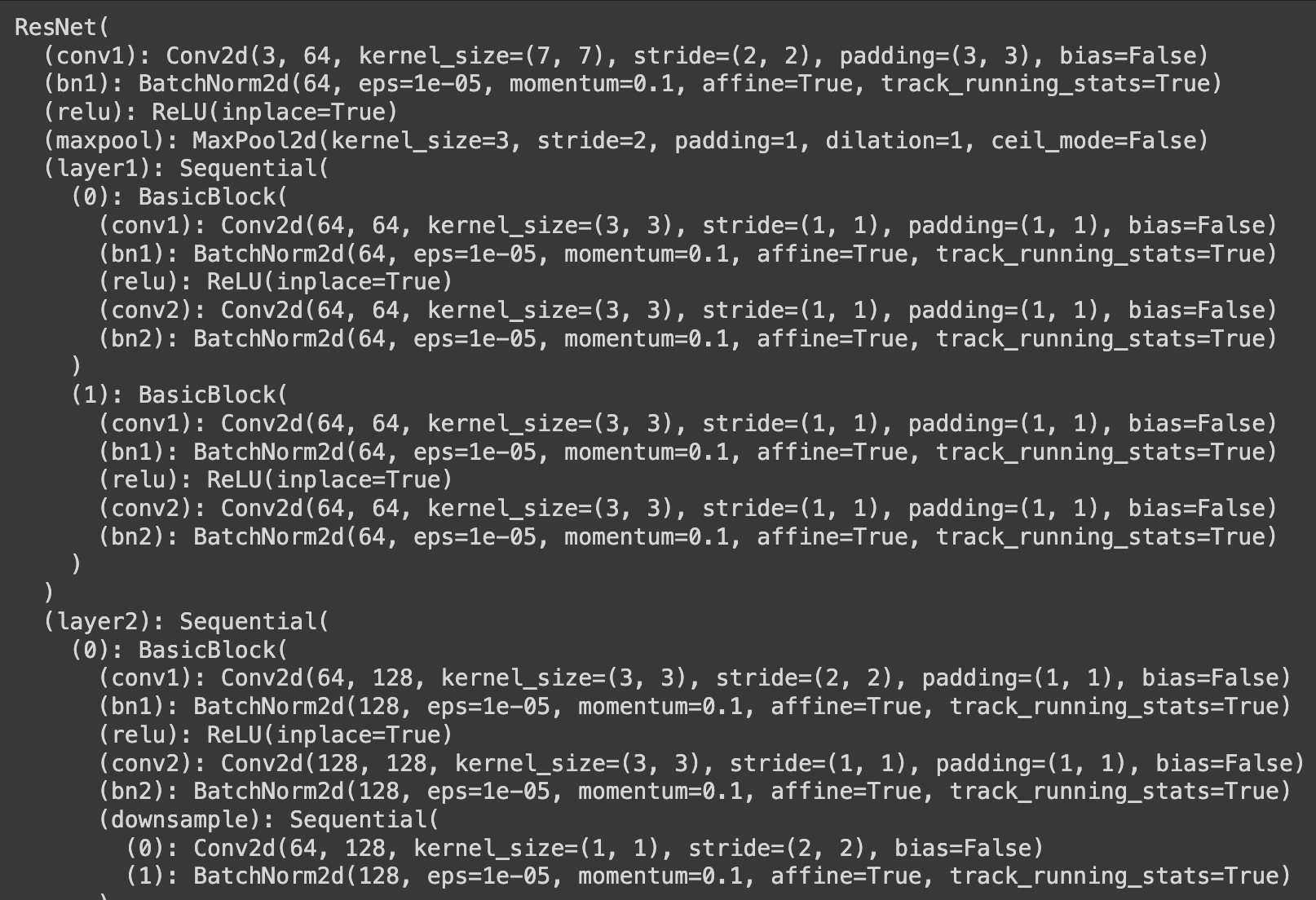

# PyTorch Convolutional Neural Network Results

Published: 2023/12/29 15:06

[TOC]

## Building a Dataset and DataLoader

### How to build a custom dataset:

1. First sort out the directory layout for the data and labels (you need to know where to read the data from).
2. Write a function that reads the data and label paths (tailor it to your own dataset).
3. Implement the function that reads a single sample and its label (this is the example the DataLoader asks for).

### Using the flower dataset as an example:

Before, the dataset used folder names as class IDs. This time we switch approaches and use a txt file to specify each image path and its label (in practice, this is almost always how it's done).

So this time the task is to get the image paths and labels out of the txt file and hand them to the DataLoader. The core code is very simple: just pass in the data and labels in the format it expects.

```python
import os
import matplotlib.pyplot as plt
# Jupyter/IPython magic: remove this line when running as a plain .py script
%matplotlib inline
import numpy as np
import torch
from torch import nn
import torch.optim as optim
import torchvision  # pip install torchvision
from torchvision import transforms, models, datasets  # https://pytorch.org/docs/stable/torchvision/index.html
import imageio
import time
import warnings
import random
import sys
import copy
import json
from PIL import Image
```

## First, let's break down what we're about to do!

#### Task 1: Read the paths and labels from the txt file

The first small task is to read the data and labels from the annotation file. Store them in whatever format you like, as long as you can get them back out later.

```python
def load_annotations(ann_file):
    """Load the image filenames and labels from an annotation file."""
    # A dict mapping filename -> label
    data_infos = {}
    with open(ann_file) as f:
        # Each line looks like: image_06739.jpg 0
        # strip() removes the trailing newline, split() splits on whitespace;
        # blank lines (e.g. a trailing newline at the end of the file) are skipped
        samples = [x.strip().split() for x in f.readlines() if x.strip()]
        for filename, gt_label in samples:
            # Store the label as an int64 NumPy array
            data_infos[filename] = np.array(gt_label, dtype=np.int64)
    return data_infos
```

```python
print(load_annotations('./train.txt'))
```

#### Task 2: Store the data and the labels in separate lists

It's not that I'm forcing lists on you: the DataLoader will fetch the data from here later. Play by its rules and don't get creative. If it wants lists, give it lists.

```python
img_label = load_annotations('./train.txt')
```

```python
# Convert the image filenames to a list
image_name = list(img_label.keys())
# Convert the labels to a list
label = list(img_label.values())
```

```python
image_name
```

```python
label
```

#### Task 3: The image paths must be complete

We'll use these paths to read the images in a moment, so each one needs its directory prefix. Your task and data will differ, so add the prefix however suits you, as long as the images can actually be read.

```python
data_dir = './'
train_dir = data_dir + 'train_filelist'
valid_dir = data_dir + 'val_filelist'
```

```python
# os.path.join: the first argument is the directory prefix, joined with each image filename
image_path = [os.path.join(train_dir, img) for img in image_name]
image_path
```

#### Task 4: Put all of the above together

1. Import with `from torch.utils.data import Dataset, DataLoader`.
2. Define the class as `class FlowerDataset(Dataset)`. You can rename `FlowerDataset` to whatever you like.
3. Rewrite `def __init__(self, root_dir, ann_file, transform=None):` for your own task.
4. `def __getitem__(self, idx):` returns the image data and label data for your task, and `def __len__(self):` returns the number of samples.

```python
from torch.utils.data import Dataset, DataLoader

class FlowerDataset(Dataset):
    def __init__(self, root_dir, ann_file, transform=None):
        # Path to the annotation file
        self.ann_file = ann_file
        # Directory that holds the images
        self.root_dir = root_dir
        # Dict of filename -> label
        self.img_label = self.load_annotations()
        # Full image paths (root_dir + filename)
        self.img = [os.path.join(self.root_dir, img) for img in self.img_label.keys()]
        # Labels, in the same order as self.img
        self.label = list(self.img_label.values())
        # Preprocessing / augmentation pipeline
        self.transform = transform

    def __len__(self):
        return len(self.img)

    def __getitem__(self, idx):
        """Return the image and label at index idx (the DataLoader picks idx, shuffled or in order)."""
        # Read the image; convert('RGB') guarantees 3 channels
        image = Image.open(self.img[idx]).convert('RGB')
        # Read the label
        label = self.label[idx]
        # Preprocess the image
        if self.transform:
            image = self.transform(image)
        # Convert the NumPy label to a tensor
        label = torch.from_numpy(np.array(label))
        # Return the image data and the label
        return image, label

    def load_annotations(self):
        """Load the image filenames and labels from the annotation file."""
        data_infos = {}
        with open(self.ann_file) as f:
            samples = [x.strip().split() for x in f.readlines() if x.strip()]
            for filename, gt_label in samples:
                data_infos[filename] = np.array(gt_label, dtype=np.int64)
        return data_infos
```

#### Task 5: Data preprocessing (transform)

1. All preprocessing happens in `__getitem__` above. Whatever you need to do to the images and labels, do it there.
2. The returned data and label are the model's input and the label fed into the loss function, so be clear about what your model expects.
3. Preprocessing is your call, and different data needs different methods. The pipeline below is a fairly general-purpose one.

```python
data_transforms = {
    'train': transforms.Compose([
        transforms.Resize(64),
        transforms.RandomRotation(45),  # random rotation, angle picked between -45 and 45 degrees
        transforms.CenterCrop(64),  # crop from the center
        transforms.RandomHorizontalFlip(p=0.5),  # random horizontal flip with probability p
        transforms.RandomVerticalFlip(p=0.5),  # random vertical flip
        transforms.ColorJitter(brightness=0.2, contrast=0.1, saturation=0.1, hue=0.1),  # brightness, contrast, saturation, hue
        transforms.RandomGrayscale(p=0.025),  # convert to grayscale with probability p; the 3 channels become R=G=B
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])  # per-channel mean, std
    ]),
    'valid': transforms.Compose([
        transforms.Resize(64),
        transforms.CenterCrop(64),
        transforms.ToTensor(),
        transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
    ]),
}
```

#### Task 6: Instantiate the DataLoaders from `class FlowerDataset(Dataset)`

1. Build the datasets: create one for training and one for validation (a test set works the same way if you need one).
2. Instantiate them with PyTorch's `DataLoader` (pick the batch size and so on yourself, based on your GPU memory).
3. Print something to check that there's actually data in there.

```python
# Training set: image directory, annotation file, preprocessing (data augmentation)
train_dataset = FlowerDataset(root_dir=train_dir, ann_file='./train.txt', transform=data_transforms['train'])
```

```python
# Validation set
val_dataset = FlowerDataset(root_dir=valid_dir, ann_file='./val.txt', transform=data_transforms['valid'])
```

```python
# DataLoaders for training and validation: batch_size = samples per batch, shuffle = reshuffle the order every epoch
train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)
val_loader = DataLoader(val_dataset, batch_size=64, shuffle=True)
```

```python
len(train_dataset)
```

>6552

```python
len(val_dataset)
```

>818

#### Task 7: Before using it, check that the data and labels match up

1. Don't rush to feed it into the model; you don't even know whether it's right yet.
2. Use `next(iter(train_loader))` to see what data and labels come out (the older `iter(train_loader).next()` fails in recent PyTorch versions).
3. If you can't tell from that, plot the image and print the label to make sure your dataset has no problems.

```python
# iter() creates an iterator over the loader; next() fetches one batch
image, label = next(iter(train_loader))
image.shape
```

>torch.Size([64, 3, 64, 64])

The first 64 is the batch size, 3 is the number of color channels, and the last two 64s are the height and width.

```python
image, label = next(iter(train_loader))
# Take the first image of the batch: image[0] already drops the batch dimension (3x64x64);
# squeeze() removes any remaining size-1 dimensions
sample = image[0].squeeze()
# Reorder from CxHxW to HxWxC for matplotlib and convert to NumPy
sample = sample.permute((1, 2, 0)).numpy()
# Undo transforms.Normalize: multiply by the std, then add the mean
sample *= [0.229, 0.224, 0.225]
sample += [0.485, 0.456, 0.406]
# Clip to [0, 1] so imshow doesn't complain about slightly out-of-range values
sample = np.clip(sample, 0, 1)
# Display
plt.imshow(sample)
plt.show()
print('Label is: {}'.format(label[0].numpy()))
```

```python
# Validation set
image, label = next(iter(val_loader))
sample = image[0].squeeze()
sample = sample.permute((1, 2, 0)).numpy()
sample *= [0.229, 0.224, 0.225]
sample += [0.485, 0.456, 0.406]
sample = np.clip(sample, 0, 1)
plt.imshow(sample)
plt.show()
print('Label is: {}'.format(label[0].numpy()))
```

#### Task 8: How you use it is up to you, so set up the model and feed the data in

We've talked through all of this before, so just do it the way you're used to.

```python
# Put the loaders in a dict
dataloaders = {'train': train_loader, 'valid': val_loader}
```

```python
model_name = 'resnet'  # many options: ['resnet', 'alexnet', 'vgg', 'squeezenet', 'densenet', 'inception']
# Whether to use someone else's pretrained features (this flag is not used in the code below)
feature_extract = True
```

```python
# Train on the GPU if one is available
train_on_gpu = torch.cuda.is_available()

if not train_on_gpu:
    print('CUDA is not available.  Training on CPU ...')
else:
    print('CUDA is available!  Training on GPU ...')

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
```

>CUDA is not available.  Training on CPU ...

```python
# No weights argument: the network is randomly initialized and trained from scratch
model_ft = models.resnet18()
model_ft
```

```python
num_ftrs = model_ft.fc.in_features
# Replace the final fully connected layer with one that outputs 102 classes
model_ft.fc = nn.Sequential(nn.Linear(num_ftrs, 102))
input_size = 64
model_ft
```

```python
# Optimizer setup
optimizer_ft = optim.Adam(model_ft.parameters(), lr=1e-3)
scheduler = optim.lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)  # decay the learning rate to 1/10 every 7 epochs
criterion = nn.CrossEntropyLoss()
```

```python
def train_model(model, dataloaders, criterion, optimizer, scheduler=None, num_epochs=25, is_inception=False, filename='best.pth'):
    since = time.time()
    best_acc = 0
    model.to(device)
    val_acc_history = []
    train_acc_history = []
    train_losses = []
    valid_losses = []
    LRs = [optimizer.param_groups[0]['lr']]
    best_model_wts = copy.deepcopy(model.state_dict())

    for epoch in range(num_epochs):
        print('Epoch {}/{}'.format(epoch, num_epochs - 1))
        print('-' * 10)

        # Training and validation
        for phase in ['train', 'valid']:
            if phase == 'train':
                model.train()  # training mode
            else:
                model.eval()   # evaluation mode

            running_loss = 0.0
            running_corrects = 0

            # Go through all the data
            for inputs, labels in dataloaders[phase]:
                inputs = inputs.to(device)
                labels = labels.to(device)

                # Zero the gradients
                optimizer.zero_grad()
                # Only compute and update gradients during training
                with torch.set_grad_enabled(phase == 'train'):
                    outputs = model(inputs)
                    loss = criterion(outputs, labels)
                    _, preds = torch.max(outputs, 1)
                    # Update the weights in the training phase
                    if phase == 'train':
                        loss.backward()
                        optimizer.step()

                # Accumulate the loss (weighted by batch size) and the number of correct predictions
                running_loss += loss.item() * inputs.size(0)
                running_corrects += torch.sum(preds == labels.data)

            epoch_loss = running_loss / len(dataloaders[phase].dataset)
            epoch_acc = running_corrects.double() / len(dataloaders[phase].dataset)

            time_elapsed = time.time() - since
            print('Time elapsed {:.0f}m {:.0f}s'.format(time_elapsed // 60, time_elapsed % 60))
            print('{} Loss: {:.4f} Acc: {:.4f}'.format(phase, epoch_loss, epoch_acc))

            # Keep the best model so far
            if phase == 'valid' and epoch_acc > best_acc:
                best_acc = epoch_acc
                best_model_wts = copy.deepcopy(model.state_dict())
                state = {
                    'state_dict': model.state_dict(),  # keys are the layer names, values are the trained weights
                    'best_acc': best_acc,
                    'optimizer': optimizer.state_dict(),  # the optimizer's state
                }
                torch.save(state, filename)
            if phase == 'valid':
                val_acc_history.append(epoch_acc)
                valid_losses.append(epoch_loss)
                # Learning-rate decay: StepLR.step() takes no metric and is called once per epoch
                if scheduler is not None:
                    scheduler.step()
            if phase == 'train':
                train_acc_history.append(epoch_acc)
                train_losses.append(epoch_loss)

        print('Optimizer learning rate : {:.7f}'.format(optimizer.param_groups[0]['lr']))
        LRs.append(optimizer.param_groups[0]['lr'])
        print()

    time_elapsed = time.time() - since
    print('Training complete in {:.0f}m {:.0f}s'.format(time_elapsed // 60, time_elapsed % 60))
    print('Best val Acc: {:.4f}'.format(best_acc))

    # After training, use the best epoch's weights as the final model for testing later
    model.load_state_dict(best_model_wts)
    return model, val_acc_history, train_acc_history, valid_losses, train_losses, LRs
```

```python
model_ft, val_acc_history, train_acc_history, valid_losses, train_losses, LRs = train_model(
    model_ft, dataloaders, criterion, optimizer_ft, scheduler=scheduler, num_epochs=20, filename='best.pth')
```
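The txt-annotation workflow from Tasks 1 to 3 (parse `filename label` lines, split them into two aligned lists, prefix the directory) can be sanity-checked without the flower images or PyTorch. The sketch below uses only the Python standard library. The helper names `parse_annotations` and `build_samples` are hypothetical, every filename except `image_06739.jpg` is made up, and labels are kept as plain `int` rather than NumPy arrays.

```python
import os
import tempfile

def parse_annotations(ann_file):
    """Hypothetical helper: read 'filename label' lines into a dict (filename -> int label)."""
    data_infos = {}
    with open(ann_file) as f:
        for line in f:
            parts = line.split()
            if not parts:  # skip blank lines, e.g. a trailing newline at the end of the file
                continue
            filename, gt_label = parts
            data_infos[filename] = int(gt_label)
    return data_infos

def build_samples(root_dir, ann_file):
    """Hypothetical helper: return (full image paths, labels) as two aligned lists."""
    img_label = parse_annotations(ann_file)
    image_paths = [os.path.join(root_dir, name) for name in img_label]
    labels = list(img_label.values())
    return image_paths, labels

if __name__ == '__main__':
    # Write a tiny annotation file in the same "image_xxxxx.jpg label" format (made-up entries)
    with tempfile.TemporaryDirectory() as tmp:
        ann_path = os.path.join(tmp, 'train.txt')
        with open(ann_path, 'w') as f:
            f.write('image_06739.jpg 0\nimage_00001.jpg 76\n\n')
        paths, labels = build_samples('train_filelist', ann_path)
        print(paths)
        print(labels)
```

Because the samples pass through a dict keyed by filename, a filename listed twice in the txt file silently keeps only its last label. The `load_annotations` method above behaves the same way, so duplicates are worth checking for if `len(dataset)` comes out smaller than the number of lines in the file.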
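`transforms.Normalize(mean, std)` maps each channel value x to (x - mean) / std. That is why the display code in Task 7 multiplies by the std first and then adds the mean. The standard-library sketch below checks that round trip on a single RGB pixel using the same mean/std values. The function names are hypothetical, the pixel is made up, and real code would apply this to whole arrays.

```python
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]

def normalize_pixel(rgb, mean=MEAN, std=STD):
    """Per-channel (x - mean) / std, the formula transforms.Normalize applies."""
    return [(x - m) / s for x, m, s in zip(rgb, mean, std)]

def denormalize_pixel(rgb, mean=MEAN, std=STD):
    """Inverse: x * std + mean, then clip to [0, 1], the range imshow expects for floats."""
    return [min(max(x * s + m, 0.0), 1.0) for x, m, s in zip(rgb, mean, std)]

if __name__ == '__main__':
    pixel = [1.0, 0.5, 0.0]  # a made-up RGB pixel after ToTensor (values in [0, 1])
    normed = normalize_pixel(pixel)
    print([round(v, 4) for v in normed])
    print([round(v, 4) for v in denormalize_pixel(normed)])
```

Reversing the order (adding the mean before multiplying by the std) gives a visibly wrong image. The clip matters because augmented and normalized values can land slightly outside [0, 1] after the inverse, due to floating-point rounding.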
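In `train_model`, each batch's mean loss is multiplied by `inputs.size(0)` before it is summed, and the total is divided by the dataset size. This matters because the last batch is usually smaller. With 6552 training images and `batch_size=64`, there are 102 full batches plus one batch of 24. The 818 validation images give 12 full batches plus one of 50. The plain-Python sketch below uses made-up loss values to compare that weighted average with a naive mean of the batch losses. `batch_sizes` and `epoch_average` are hypothetical helpers, and the batch split assumes the `DataLoader` default `drop_last=False`.

```python
def batch_sizes(num_samples, batch_size):
    """Batch sizes a DataLoader yields with drop_last=False (the default)."""
    full, rest = divmod(num_samples, batch_size)
    return [batch_size] * full + ([rest] if rest else [])

def epoch_average(batch_means, sizes):
    """Per-sample average: sum(mean_loss * batch_size) / total samples, as in train_model."""
    return sum(m * n for m, n in zip(batch_means, sizes)) / sum(sizes)

if __name__ == '__main__':
    sizes = batch_sizes(6552, 64)
    print(len(sizes), sizes[-1])  # number of batches, size of the last one
    # Made-up batch losses: every full batch averages 1.0, the small last batch 3.0
    means = [1.0] * (len(sizes) - 1) + [3.0]
    print(epoch_average(means, sizes))  # weighted: the last batch counts as 24 samples
    print(sum(means) / len(means))      # naive: overweights the small last batch
```

The naive mean treats the 24-sample batch as if it were as important as a full 64-sample batch. The weighted version gives the true per-sample average loss over the epoch. Accuracy needs no such correction because `running_corrects` is already a plain count.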
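With `StepLR(optimizer_ft, step_size=7, gamma=0.1)`, and assuming `scheduler.step()` is called exactly once per epoch, the learning rate used during epoch e (counting from 0) is lr0 · gamma^(e // step_size). The sketch below just evaluates that closed form in plain Python for the 20-epoch run with `lr=1e-3`. `step_lr` is a hypothetical helper, not part of PyTorch.

```python
def step_lr(base_lr, epoch, step_size=7, gamma=0.1):
    """Learning rate in effect during `epoch` (0-based) under a StepLR-style schedule."""
    return base_lr * gamma ** (epoch // step_size)

if __name__ == '__main__':
    for epoch in range(20):
        print(epoch, step_lr(1e-3, epoch))
```

For 20 epochs this gives 1e-3 for epochs 0 to 6, 1e-4 for epochs 7 to 13, and 1e-5 for epochs 14 to 19. If `step()` is called more than once per epoch (for example, once per phase), the decay arrives proportionally earlier.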